Most read

This page lists the top ten most read articles for this journal based on the number of full text views and downloads recorded on Cambridge Core over the last 90 days. This list is updated on a daily basis.

Colouring graphs with forbidden bipartite subgraphs

- Published online by Cambridge University Press: 08 June 2022, pp. 45-67

- Article

- Open access

Spectral gap in random bipartite biregular graphs and applications

- Published online by Cambridge University Press: 23 July 2021, pp. 229-267

- Article

- Open access

Mastermind with a linear number of queries

- Published online by Cambridge University Press: 08 November 2023, pp. 143-156

- Article

- Open access

Large monochromatic components in expansive hypergraphs

- Published online by Cambridge University Press: 05 March 2024, pp. 467-483

- Article

- Open access

Tight Hamilton cycles with high discrepancy

- Published online by Cambridge University Press: 30 May 2025, pp. 565-584

- Article

- Open access

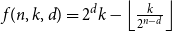

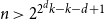

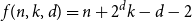

Subspace coverings with multiplicities

- Published online by Cambridge University Press: 18 May 2023, pp. 782-795

- Article

- Open access

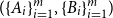

Problems and results on 1-cross-intersecting set pair systems

- Published online by Cambridge University Press: 24 April 2023, pp. 691-702

- Article

- Open access

Hypergraphs without complete partite subgraphs

- Published online by Cambridge University Press: 11 December 2025, pp. 1-4

- Article

- Open access

A note on digraph splitting

- Published online by Cambridge University Press: 21 March 2025, pp. 559-564

- Article

- Open access

Off-diagonal book Ramsey numbers

- Published online by Cambridge University Press: 09 January 2023, pp. 516-545

- Article

- Open access