52629 results in Statistics and Probability

Chapter 2 - Toward Improved Workplace Measurement with Passive Sensing Technologies

- from Part I - Foundations

- Book: Technology and Measurement around the Globe

- Published online: 08 November 2023

- Print publication: 09 November 2023, pp 46-106

- Chapter

Chapter 6 - Mobile Sensing around the Globe

- from Part II - Global Perspectives on Key Methods/Topics

- Book: Technology and Measurement around the Globe

- Published online: 08 November 2023

- Print publication: 09 November 2023, pp 176-210

- Chapter

Chapter 9 - A European Perspective on Psychometric Measurement Technology

- from Part III - Regional Focus

- Book: Technology and Measurement around the Globe

- Published online: 08 November 2023

- Print publication: 09 November 2023, pp 271-307

- Chapter

Contributors

- Book: Technology and Measurement around the Globe

- Published online: 08 November 2023

- Print publication: 09 November 2023, pp vii-viii

- Chapter

Chapter 12 - Reflections on Testing, Assessment, and the Future

- from Part III - Regional Focus

- Book: Technology and Measurement around the Globe

- Published online: 08 November 2023

- Print publication: 09 November 2023, pp 349-359

- Chapter

Chapter 10 - Testing and Measurement in North America with a Focus on Transformation

- from Part III - Regional Focus

- Book: Technology and Measurement around the Globe

- Published online: 08 November 2023

- Print publication: 09 November 2023, pp 308-325

- Chapter

Chapter 1 - Machine Learning Algorithms and Measurement

- from Part I - Foundations

- Book: Technology and Measurement around the Globe

- Published online: 08 November 2023

- Print publication: 09 November 2023, pp 11-45

- Chapter

Chapter 8 - Technology-Enabled Measurement in Singapore

- from Part III - Regional Focus

- Book: Technology and Measurement around the Globe

- Published online: 08 November 2023

- Print publication: 09 November 2023, pp 239-270

- Chapter

Chapter 11 - Technology and Measurement Challenges in Education and the Labor Market in South America

- from Part III - Regional Focus

- Book: Technology and Measurement around the Globe

- Published online: 08 November 2023

- Print publication: 09 November 2023, pp 326-348

- Chapter

Chapter 7 - Technology and Measurement in Asia

- from Part III - Regional Focus

- Book: Technology and Measurement around the Globe

- Published online: 08 November 2023

- Print publication: 09 November 2023, pp 213-238

- Chapter

Part II - Global Perspectives on Key Methods/Topics

- Book: Technology and Measurement around the Globe

- Published online: 08 November 2023

- Print publication: 09 November 2023, pp 129-210

- Chapter

Technology and Measurement around the Globe

- Published online: 08 November 2023

- Print publication: 09 November 2023

On oriented cycles in randomly perturbed digraphs

- Journal: Combinatorics, Probability and Computing / Volume 33 / Issue 2 / March 2024

- Published online by Cambridge University Press: 08 November 2023, pp. 157-178

- Article

- Open access

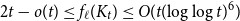

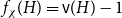

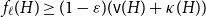

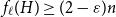

Mastermind with a linear number of queries

- Journal: Combinatorics, Probability and Computing / Volume 33 / Issue 2 / March 2024

- Published online by Cambridge University Press: 08 November 2023, pp. 143-156

- Article

- Open access

Characteristics of the switch process and geometric divisibility

- Journal: Journal of Applied Probability / Volume 61 / Issue 3 / September 2024

- Published online by Cambridge University Press: 06 November 2023, pp. 802-809

- Print publication: September 2024

- Article

Graph-based methods for discrete choice

- Journal: Network Science / Volume 12 / Issue 1 / March 2024

- Published online by Cambridge University Press: 06 November 2023, pp. 21-40

- Article

- Open access

Skin and soft tissue infection incidence before and during the COVID-19 pandemic

- Journal: Epidemiology & Infection / Volume 151 / 2023

- Published online by Cambridge University Press: 06 November 2023, e190

- Article

- Open access

SUBSAMPLING INFERENCE FOR NONPARAMETRIC EXTREMAL CONDITIONAL QUANTILES

- Journal: Econometric Theory / Volume 41 / Issue 2 / April 2025

- Published online by Cambridge University Press: 06 November 2023, pp. 326-340

- Article

- Open access

On the choosability of $H$-minor-free graphs

- Journal: Combinatorics, Probability and Computing / Volume 33 / Issue 2 / March 2024

- Published online by Cambridge University Press: 03 November 2023, pp. 129-142

- Article

- Open access

Parametrized polyconvex hyperelasticity with physics-augmented neural networks

- Journal: Data-Centric Engineering / Volume 4 / 2023

- Published online by Cambridge University Press: 03 November 2023, e25

- Article

- Open access