52629 results in Statistics and Probability

Spanning trees in graphs without large bipartite holes

- Journal: Combinatorics, Probability and Computing / Volume 33 / Issue 3 / May 2024
- Published online by Cambridge University Press: 14 November 2023, pp. 270-285
- Article

-

- You have access

- HTML

- Export citation

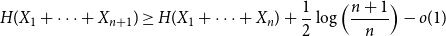

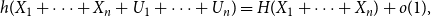

Approximate discrete entropy monotonicity for log-concave sums

- Journal: Combinatorics, Probability and Computing / Volume 33 / Issue 2 / March 2024
- Published online by Cambridge University Press: 13 November 2023, pp. 196-209
- Article

Evaluation of phase-adjusted interventions for COVID-19 using an improved SEIR model

- Journal: Epidemiology & Infection / Volume 152 / 2024
- Published online by Cambridge University Press: 13 November 2023, e9
- Article

- Open access

Calibration of transition risk for corporate bonds

- Journal: British Actuarial Journal / Volume 28 / 2023
- Published online by Cambridge University Press: 13 November 2023, e8
- Article

- Open access

Prevalence and incidence of emergency department presentations and hospital separations with injecting-related infections in a longitudinal cohort of people who inject drugs

- Journal: Epidemiology & Infection / Volume 151 / 2023
- Published online by Cambridge University Press: 13 November 2023, e192
- Article

- Open access

Association between face mask use and risk of SARS-CoV-2 infection: Cross-sectional study

- Journal: Epidemiology & Infection / Volume 151 / 2023
- Published online by Cambridge University Press: 13 November 2023, e194
- Article

- Open access

PERFORMANCE OF EMPIRICAL RISK MINIMIZATION FOR LINEAR REGRESSION WITH DEPENDENT DATA

- Journal: Econometric Theory / Volume 41 / Issue 2 / April 2025
- Published online by Cambridge University Press: 10 November 2023, pp. 391-420
- Article

- Open access

- Export citation

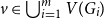

A special case of Vu’s conjecture: colouring nearly disjoint graphs of bounded maximum degree

- Journal: Combinatorics, Probability and Computing / Volume 33 / Issue 2 / March 2024
- Published online by Cambridge University Press: 10 November 2023, pp. 179-195
- Article

- Open access

Statistics and Data Visualization in Climate Science with R and Python

- Published online: 09 November 2023
- Print publication: 30 November 2023

Applying Benford's Law for Assessing the Validity of Social Science Data

- Published online: 09 November 2023
- Print publication: 23 November 2023

Part III - Regional Focus

- Book: Technology and Measurement around the Globe
- Published online: 08 November 2023
- Print publication: 09 November 2023, pp. 211-359
- Chapter

Chapter 3 - Information Privacy

- from Part I - Foundations
- Book: Technology and Measurement around the Globe
- Published online: 08 November 2023
- Print publication: 09 November 2023, pp. 107-128
- Chapter

Contents

- Book: Technology and Measurement around the Globe
- Published online: 08 November 2023
- Print publication: 09 November 2023, pp. v-vi
- Chapter

Chapter 5 - Game-Based Assessment around the Globe

- from Part II - Global Perspectives on Key Methods/Topics
- Book: Technology and Measurement around the Globe
- Published online: 08 November 2023
- Print publication: 09 November 2023, pp. 157-175
- Chapter

Chapter 4 - Social Media Assessments around the Globe

- from Part II - Global Perspectives on Key Methods/Topics
- Book: Technology and Measurement around the Globe
- Published online: 08 November 2023
- Print publication: 09 November 2023, pp. 131-156
- Chapter

Index

- Book: Technology and Measurement around the Globe
- Published online: 08 November 2023
- Print publication: 09 November 2023, pp. 360-370
- Chapter

Introduction

- Book: Technology and Measurement around the Globe
- Published online: 08 November 2023
- Print publication: 09 November 2023, pp. 1-8
- Chapter

Evaluation of global sensitivity analysis methods for computational structural mechanics problems

- Journal: Data-Centric Engineering / Volume 4 / 2023
- Published online by Cambridge University Press: 09 November 2023, e28
- Article

- Open access

Copyright page

- Book: Technology and Measurement around the Globe
- Published online: 08 November 2023
- Print publication: 09 November 2023, p. iv
- Chapter

Part I - Foundations

- Book: Technology and Measurement around the Globe
- Published online: 08 November 2023
- Print publication: 09 November 2023, pp. 9-128
- Chapter
