212971 results in Engineering

8 - Ion Implantation

- Book:

- Integrated Circuit Fabrication

- Published online:

- 01 December 2023

- Print publication:

- 16 November 2023, pp 380-447

- Chapter

Effects of antagonistic muscle actuation on the bilaminar structure of ray-finned fish in propulsion

- Journal:

- Journal of Fluid Mechanics / Volume 975 / 25 November 2023

- Published online by Cambridge University Press:

- 16 November 2023, A23

- Article

Forced oscillations of a cylinder in the flow of viscoelastic fluids

- Journal:

- Journal of Fluid Mechanics / Volume 975 / 25 November 2023

- Published online by Cambridge University Press:

- 16 November 2023, A28

- Article

3 - Economic Incentive

- from Part I - Cryptoeconomics Basics

- Book:

- Cryptoeconomics

- Published online:

- 06 January 2024

- Print publication:

- 16 November 2023, pp 39-50

- Chapter

4 - Semiconductor Manufacturing: Cleanrooms, Wafer Cleaning, Gettering and Chip Yield

- Book:

- Integrated Circuit Fabrication

- Published online:

- 01 December 2023

- Print publication:

- 16 November 2023, pp 111-158

- Chapter

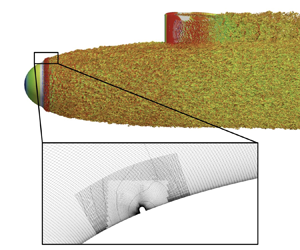

A POD-mode-augmented wall model and its applications to flows at non-equilibrium conditions

- Journal:

- Journal of Fluid Mechanics / Volume 975 / 25 November 2023

- Published online by Cambridge University Press:

- 16 November 2023, A24

- Article

Tripping effects on model-scale studies of flow over the DARPA SUBOFF

- Journal:

- Journal of Fluid Mechanics / Volume 975 / 25 November 2023

- Published online by Cambridge University Press:

- 15 November 2023, A3

- Article

- Open access

Detailed characterization of kHz-rate laser-driven fusion at a thin liquid sheet with a neutron detection suite

- Journal:

- High Power Laser Science and Engineering / Volume 12 / 2024

- Published online by Cambridge University Press:

- 15 November 2023, e2

- Article

- Open access

Revisiting the role of friction coefficients in granular collapses: confrontation of 3-D non-smooth simulations with experiments

- Journal:

- Journal of Fluid Mechanics / Volume 975 / 25 November 2023

- Published online by Cambridge University Press:

- 15 November 2023, A14

- Article

- Open access

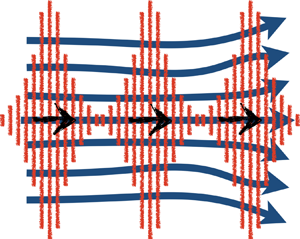

Theory of acoustic streaming for arbitrary Reynolds number flow

- Journal:

- Journal of Fluid Mechanics / Volume 975 / 25 November 2023

- Published online by Cambridge University Press:

- 15 November 2023, A4

- Article

- Open access

Active frequency selective surfaces: a systematic review for sub-6 GHz band

- Journal:

- International Journal of Microwave and Wireless Technologies / Volume 16 / Issue 4 / May 2024

- Published online by Cambridge University Press:

- 15 November 2023, pp. 544-558

- Article

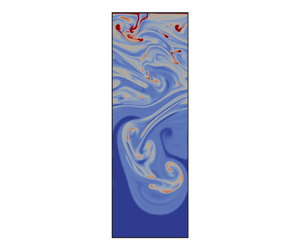

Interacting density fronts in saturated brines cooled from above

- Journal:

- Journal of Fluid Mechanics / Volume 975 / 25 November 2023

- Published online by Cambridge University Press:

- 15 November 2023, A5

- Article

- Open access

Design, performance and application of a line-imaging velocity interferometer system for any reflector coupled with a streaked optical pyrometer system at the Shenguang-II upgrade laser facility

- Journal:

- High Power Laser Science and Engineering / Volume 12 / 2024

- Published online by Cambridge University Press:

- 15 November 2023, e6

- Article

- Open access

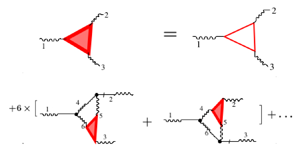

Energy flux and high-order statistics of hydrodynamic turbulence

- Journal:

- Journal of Fluid Mechanics / Volume 975 / 25 November 2023

- Published online by Cambridge University Press:

- 15 November 2023, A17

- Article

Predicting turbulent dynamics with the convolutional autoencoder echo state network

- Journal:

- Journal of Fluid Mechanics / Volume 975 / 25 November 2023

- Published online by Cambridge University Press:

- 15 November 2023, A2

- Article

- Open access

Dispersion effects in porous medium gravity currents experiencing local drainage

- Journal:

- Journal of Fluid Mechanics / Volume 975 / 25 November 2023

- Published online by Cambridge University Press:

- 14 November 2023, A18

- Article

- Open access

Dedication

- Book:

- Neurocomputational Poetics

- Published by:

- Anthem Press

- Published online:

- 01 March 2024

- Print publication:

- 14 November 2023, pp ix-x

- Chapter

4 - Reader and Reading Act Analysis

- Book:

- Neurocomputational Poetics

- Published by:

- Anthem Press

- Published online:

- 01 March 2024

- Print publication:

- 14 November 2023, pp 87-112

- Chapter

Frontmatter

- Book:

- Neurocomputational Poetics

- Published by:

- Anthem Press

- Published online:

- 01 March 2024

- Print publication:

- 14 November 2023, pp i-iv

- Chapter

5 - Computational Poetics I: Simple Applications

- Book:

- Neurocomputational Poetics

- Published by:

- Anthem Press

- Published online:

- 01 March 2024

- Print publication:

- 14 November 2023, pp 113-140

- Chapter