52666 results in Statistics and Probability

A value-at-risk approach to mis-estimation risk (Discussion)

- Journal: British Actuarial Journal / Volume 27 / 2022

- Published online by Cambridge University Press: 24 January 2022, e3

- Article

- Open access

A stochastic process on a network with connections to Laplacian systems of equations

- Journal: Advances in Applied Probability / Volume 54 / Issue 1 / March 2022

- Published online by Cambridge University Press: 21 January 2022, pp. 254-278

- Print publication: March 2022

- Article

The impact of meteorological factors and PM2.5 on COVID-19 transmission

- Journal: Epidemiology & Infection / Volume 150 / 2022

- Published online by Cambridge University Press: 21 January 2022, e38

- Article

- Open access

Your reserves may be best estimate, but are they valid?

- Journal: British Actuarial Journal / Volume 27 / 2022

- Published online by Cambridge University Press: 20 January 2022, e2

- Article

- Open access

THE SAINT MODEL: A DECADE LATER

- Journal: ASTIN Bulletin: The Journal of the IAA / Volume 52 / Issue 2 / May 2022

- Published online by Cambridge University Press: 20 January 2022, pp. 483-517

- Print publication: May 2022

- Article

- Open access

NONPARAMETRIC ESTIMATION OF GENERALIZED TRANSFORMATION MODELS WITH FIXED EFFECTS

- Journal: Econometric Theory / Volume 39 / Issue 2 / April 2023

- Published online by Cambridge University Press: 20 January 2022, pp. 357-388

- Article

AI: Coming of age?

- Journal: Annals of Actuarial Science / Volume 16 / Issue 1 / March 2022

- Published online by Cambridge University Press: 19 January 2022, pp. 1-5

- Article

Connection between two historical tuberculosis outbreak sites in Japan, Honshu, by a new ancestral Mycobacterium tuberculosis L2 sublineage

- Journal: Epidemiology & Infection / Volume 150 / 2022

- Published online by Cambridge University Press: 19 January 2022, e56

- Article

- Open access

The value of network data confirmed by the Covid-19 epidemic and its expanded usages

- Journal: Data & Policy / Volume 4 / 2022

- Published online by Cambridge University Press: 19 January 2022, e4

- Article

- Open access

The generalized join the shortest orbit queue system: stability, exact tail asymptotics and stationary approximations

- Journal: Probability in the Engineering and Informational Sciences / Volume 37 / Issue 1 / January 2023

- Published online by Cambridge University Press: 19 January 2022, pp. 154-191

- Article

An ergodic theorem for the weighted ensemble method

- Journal: Journal of Applied Probability / Volume 59 / Issue 1 / March 2022

- Published online by Cambridge University Press: 18 January 2022, pp. 152-166

- Print publication: March 2022

- Article

Rate of strong convergence to Markov-modulated Brownian motion

- Journal: Journal of Applied Probability / Volume 59 / Issue 1 / March 2022

- Published online by Cambridge University Press: 18 January 2022, pp. 1-16

- Print publication: March 2022

- Article

Evaluating ex situ rates of carbon dioxide flux from northern Borneo peat swamp soils

- Journal: Experimental Results / Volume 3 / 2022

- Published online by Cambridge University Press: 18 January 2022, e4

- Article

- Open access

On the continuity of Pickands constants

- Journal: Journal of Applied Probability / Volume 59 / Issue 1 / March 2022

- Published online by Cambridge University Press: 18 January 2022, pp. 187-201

- Print publication: March 2022

- Article

How a probabilistic analogue of the mean value theorem yields Stein-type covariance identities

- Journal: Journal of Applied Probability / Volume 59 / Issue 2 / June 2022

- Published online by Cambridge University Press: 18 January 2022, pp. 350-365

- Print publication: June 2022

- Article

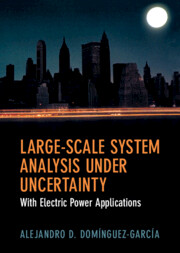

Large-Scale System Analysis Under Uncertainty

- With Electric Power Applications

- Published online: 17 January 2022

- Print publication: 17 February 2022

Identification and description of patients with multisystem inflammatory syndrome in adults associated with SARS-CoV-2 infection using the Premier Healthcare Database

- Journal: Epidemiology & Infection / Volume 150 / 2022

- Published online by Cambridge University Press: 17 January 2022, e26

- Article

- Open access

Intersections of random sets

- Journal: Journal of Applied Probability / Volume 59 / Issue 1 / March 2022

- Published online by Cambridge University Press: 17 January 2022, pp. 131-151

- Print publication: March 2022

- Article

Data protection for the common good: Developing a framework for a data protection-focused data commons

- Journal: Data & Policy / Volume 4 / 2022

- Published online by Cambridge University Press: 17 January 2022, e3

- Article

- Open access

A note on the Screaming Toes game

- Journal: Journal of Applied Probability / Volume 59 / Issue 1 / March 2022

- Published online by Cambridge University Press: 17 January 2022, pp. 118-130

- Print publication: March 2022

- Article