52383 results in Statistics and Probability

Mass gathering events and undetected transmission of SARS-CoV-2 in vulnerable populations leading to an outbreak with high case fatality ratio in the district of Tirschenreuth, Germany

- Journal:

- Epidemiology & Infection / Volume 148 / 2020

- Published online by Cambridge University Press:

- 13 October 2020, e252

- Article

- Open access

Covering and tiling hypergraphs with tight cycles

- Journal:

- Combinatorics, Probability and Computing / Volume 30 / Issue 2 / March 2021

- Published online by Cambridge University Press:

- 13 October 2020, pp. 288-329

- Article

Tight Hamilton cycles in cherry-quasirandom 3-uniform hypergraphs

- Journal:

- Combinatorics, Probability and Computing / Volume 30 / Issue 3 / May 2021

- Published online by Cambridge University Press:

- 12 October 2020, pp. 412-443

- Article

- Open access

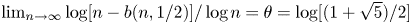

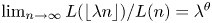

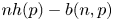

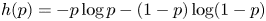

ASYMPTOTIC ANALYSIS OF PERES’ ALGORITHM FOR RANDOM NUMBER GENERATION

- Journal:

- Probability in the Engineering and Informational Sciences / Volume 36 / Issue 2 / April 2022

- Published online by Cambridge University Press:

- 12 October 2020, pp. 341-356

- Article

Sharp bounds for decomposing graphs into edges and triangles

- Journal:

- Combinatorics, Probability and Computing / Volume 30 / Issue 2 / March 2021

- Published online by Cambridge University Press:

- 12 October 2020, pp. 271-287

- Article

- Open access

LAPLACE BOUNDS APPROXIMATION FOR AMERICAN OPTIONS

- Journal:

- Probability in the Engineering and Informational Sciences / Volume 36 / Issue 2 / April 2022

- Published online by Cambridge University Press:

- 09 October 2020, pp. 514-547

- Article

First observations of Polar Mesospheric Echoes at both 31 MHz and 53.5 MHz over Svalbard (78.2°N 15.1°E)

- Journal:

- Experimental Results / Volume 1 / 2020

- Published online by Cambridge University Press:

- 09 October 2020, e44

- Article

- Open access

Candida albicans isolated from denture-related stomatitis in elderly patients: Antifungal susceptibility and production of virulence attributes

- Journal:

- Experimental Results / Volume 1 / 2020

- Published online by Cambridge University Press:

- 09 October 2020, e43

- Article

- Open access

A predictive model for Covid-19 spread – with application to eight US states and how to end the pandemic

- Journal:

- Epidemiology & Infection / Volume 148 / 2020

- Published online by Cambridge University Press:

- 08 October 2020, e249

- Article

- Open access

ESTIMATION OF VOLATILITY FUNCTIONS IN JUMP DIFFUSIONS USING TRUNCATED BIPOWER INCREMENTS

- Journal:

- Econometric Theory / Volume 37 / Issue 5 / October 2021

- Published online by Cambridge University Press:

- 08 October 2020, pp. 926-958

- Article

NWS volume 8 issue 4 Cover and Front matter

- Journal:

- Network Science / Volume 8 / Issue 4 / December 2020

- Published online by Cambridge University Press:

- 07 October 2020, pp. f1-f3

- Article

NWS volume 8 issue 4 Cover and Back matter

- Journal:

- Network Science / Volume 8 / Issue 4 / December 2020

- Published online by Cambridge University Press:

- 07 October 2020, pp. b1-b2

- Article

The use of multiple hypothesis-generating methods in an outbreak investigation of Escherichia coli O121 infections associated with wheat flour, Canada 2016–2017

- Journal:

- Epidemiology & Infection / Volume 148 / 2020

- Published online by Cambridge University Press:

- 07 October 2020, e265

- Article

- Open access

Toxoplasmosis and knowledge: what do the Italian women know about?

- Journal:

- Epidemiology & Infection / Volume 148 / 2020

- Published online by Cambridge University Press:

- 07 October 2020, e256

- Article

- Open access

SARS-CoV-2 transmission: a sociological review

- Journal:

- Epidemiology & Infection / Volume 148 / 2020

- Published online by Cambridge University Press:

- 07 October 2020, e242

- Article

- Open access

Quantitative Genetics

- Published online:

- 06 October 2020

- Print publication:

- 23 April 2020

- Textbook

Forecasting the epidemiological trends of COVID-19 prevalence and mortality using the advanced α-Sutte Indicator

- Journal:

- Epidemiology & Infection / Volume 148 / 2020

- Published online by Cambridge University Press:

- 05 October 2020, e236

- Article

- Open access

A meta-analytic evaluation of sex differences in meningococcal disease incidence rates in 10 countries

- Journal:

- Epidemiology & Infection / Volume 148 / 2020

- Published online by Cambridge University Press:

- 02 October 2020, e246

- Article

- Open access

Forage plant mixture type interacts with soil moisture to affect soil nutrient availability in the short term

- Journal:

- Experimental Results / Volume 1 / 2020

- Published online by Cambridge University Press:

- 02 October 2020, e42

- Article

- Open access

Learning socio-organizational network structure in buildings with ambient sensing data

- Journal:

- Data-Centric Engineering / Volume 1 / 2020

- Published online by Cambridge University Press:

- 02 October 2020, e9

- Article

- Open access