52627 results in Statistics and Probability

4 - Inferential Methods for Distributional Regression

- from Part II - Statistical Inference in GAMLSS

- Book: Generalized Additive Models for Location, Scale and Shape

- Published online: 22 February 2024

- Print publication: 29 February 2024, pp 77-108

- Chapter

6 - Coda

- Book: Subscores

- Published online: 22 February 2024

- Print publication: 29 February 2024, pp 136-149

- Chapter

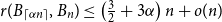

Average Jaccard index of random graphs

- Journal: Journal of Applied Probability / Volume 61 / Issue 4 / December 2024

- Published online by Cambridge University Press: 26 February 2024, pp. 1139-1152

- Print publication: December 2024

- Article

Analysis of $d$-ary tree algorithms with successive interference cancellation

- Journal: Journal of Applied Probability / Volume 61 / Issue 3 / September 2024

- Published online by Cambridge University Press: 26 February 2024, pp. 1075-1105

- Print publication: September 2024

- Article

- Open access

Monitoring antimicrobial resistance in Campylobacter isolates of chickens and turkeys at the slaughter establishment level across the United States, 2013–2021

- Journal: Epidemiology & Infection / Volume 152 / 2024

- Published online by Cambridge University Press: 26 February 2024, e41

- Article

- Open access

Motorized three-wheelers and their potential for just mobility in Caribbean urban areas

- Journal: Data & Policy / Volume 6 / 2024

- Published online by Cambridge University Press: 26 February 2024, e11

- Article

- Open access

Environmental predictors of Escherichia coli concentration at marine beaches in Vancouver, Canada: a Bayesian mixed-effects modelling analysis

- Journal: Epidemiology & Infection / Volume 152 / 2024

- Published online by Cambridge University Press: 26 February 2024, e38

- Article

- Open access

Shedding and exclusion from childcare in children with Shiga toxin-producing Escherichia coli, 2018–2022

- Journal: Epidemiology & Infection / Volume 152 / 2024

- Published online by Cambridge University Press: 26 February 2024, e42

- Article

- Open access

Connectivity of random graphs after centrality-based vertex removal

- Journal: Journal of Applied Probability / Volume 61 / Issue 3 / September 2024

- Published online by Cambridge University Press: 23 February 2024, pp. 967-998

- Print publication: September 2024

- Article

- Open access

Subscores

- A Practical Guide to Their Production and Consumption

- Published online: 22 February 2024

- Print publication: 29 February 2024

Generalized Additive Models for Location, Scale and Shape

- A Distributional Regression Approach, with Applications

- Published online: 22 February 2024

- Print publication: 29 February 2024

Medical exemptions to mandatory vaccinations: The state of play in Australia and a pressure point to watch

- Journal: Epidemiology & Infection / Volume 152 / 2024

- Published online by Cambridge University Press: 22 February 2024, e40

- Article

- Open access

Continuous dependence of stationary distributions on parameters for stochastic predator–prey models

- Journal: Journal of Applied Probability / Volume 61 / Issue 3 / September 2024

- Published online by Cambridge University Press: 22 February 2024, pp. 1010-1028

- Print publication: September 2024

- Article

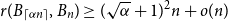

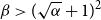

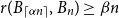

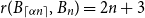

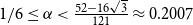

Small subsets with large sumset: Beyond the Cauchy–Davenport bound

- Journal: Combinatorics, Probability and Computing / Volume 33 / Issue 4 / July 2024

- Published online by Cambridge University Press: 21 February 2024, pp. 411-431

- Article

Mycoplasma pneumoniae: current outbreak

- Journal: Epidemiology & Infection / Volume 152 / 2024

- Published online by Cambridge University Press: 21 February 2024, e47

- Article

- Open access

Consideration of the proxy modelling validation framework

- Journal: British Actuarial Journal / Volume 29 / 2024

- Published online by Cambridge University Press: 19 February 2024, e2

- Article

- Open access

Applied Probability Trust prizes 2023

- Journal: Journal of Applied Probability / Volume 61 / Issue 1 / March 2024

- Published online by Cambridge University Press: 16 February 2024, p. 368

- Print publication: March 2024

- Article

On a conjecture of Conlon, Fox, and Wigderson

- Journal: Combinatorics, Probability and Computing / Volume 33 / Issue 4 / July 2024

- Published online by Cambridge University Press: 16 February 2024, pp. 432-445

- Article

JPR volume 61 issue 1 Cover and Front matter

- Journal: Journal of Applied Probability / Volume 61 / Issue 1 / March 2024

- Published online by Cambridge University Press: 16 February 2024, pp. f1-f2

- Print publication: March 2024

- Article

Analyzing the multi-state system under a run shock model

- Journal: Probability in the Engineering and Informational Sciences / Volume 38 / Issue 4 / October 2024

- Published online by Cambridge University Press: 16 February 2024, pp. 619-631

- Article

- Open access